Free Helpline

We are here to assist you.

Health Advisor

+91-8877772277

Available 7 days a week, 10:00 AM – 6:00 PM, to support you with urgent concerns and guide you toward the right care.

Understand the peripheral blood smear test for malaria diagnosis, its procedure, and why it's a vital tool in combating this disease.

Malaria, a potentially fatal mosquito-borne illness, remains a significant global health challenge. Swift and accurate diagnosis is essential for effective treatment and for preventing severe complications.

Among the available diagnostic methods, examination of a blood film remains the gold standard, particularly in resource-limited regions where advanced testing technologies may not be readily accessible. The approach has proven its enduring value over time.

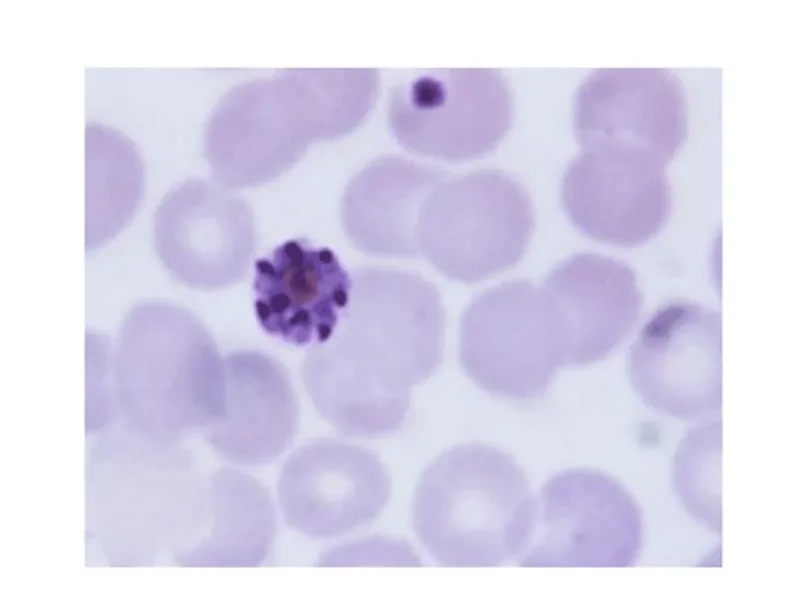

This technique involves the microscopic examination of a small blood sample, typically obtained by pricking a fingertip, which is spread on a glass slide and stained. Trained laboratory staff then carefully search the red blood cells for Plasmodium parasites.

The parasites' morphology and distinctive features, along with their location inside the cells, help identify which Plasmodium species is responsible for the infection. Identifying the species is essential because the different species vary in severity and call for different treatment strategies.

The blood film examination provides both a qualitative assessment (whether parasites are present or absent) and a quantitative one (parasite density, or parasitemia). High parasite densities are frequently linked to more severe disease and a poorer prognosis.

Quantifying the parasite load therefore provides valuable prognostic information.
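As a minimal sketch of the arithmetic involved, the Python below assumes the common convention of counting parasites against white blood cells (WBCs) on the thick film and, when the patient's own WBC count is not available, assuming 8,000 WBCs per microliter. The function names are hypothetical, and the thin-film percentage (infected red cells per red cells counted) is included as the other widely used way of expressing parasite load.

```python
# Conventional default used when the patient's measured WBC count is unknown.
# This is an assumption in the estimate, not a measured value.
ASSUMED_WBC_PER_UL = 8000


def thick_film_density(parasites_counted: int, wbcs_counted: int,
                       wbc_per_ul: float = ASSUMED_WBC_PER_UL) -> float:
    """Estimate parasites per microliter from a thick-film count made against WBCs."""
    if wbcs_counted <= 0:
        raise ValueError("wbcs_counted must be positive")
    # Scale the parasite-to-WBC ratio by the (assumed) number of WBCs per microliter.
    return parasites_counted * wbc_per_ul / wbcs_counted


def thin_film_percent(infected_rbcs: int, total_rbcs: int) -> float:
    """Percentage of counted red blood cells that contain parasites."""
    if total_rbcs <= 0:
        raise ValueError("total_rbcs must be positive")
    return 100.0 * infected_rbcs / total_rbcs


# Usage: 50 parasites seen while counting 200 WBCs on a thick film.
print(thick_film_density(50, 200))   # 2000.0 parasites per microliter
# Usage: 25 infected cells among 1,000 red cells on a thin film.
print(thin_film_percent(25, 1000))   # 2.5 percent
```

Where a measured WBC count is available, passing it as `wbc_per_ul` gives a patient-specific estimate instead of one based on the default.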

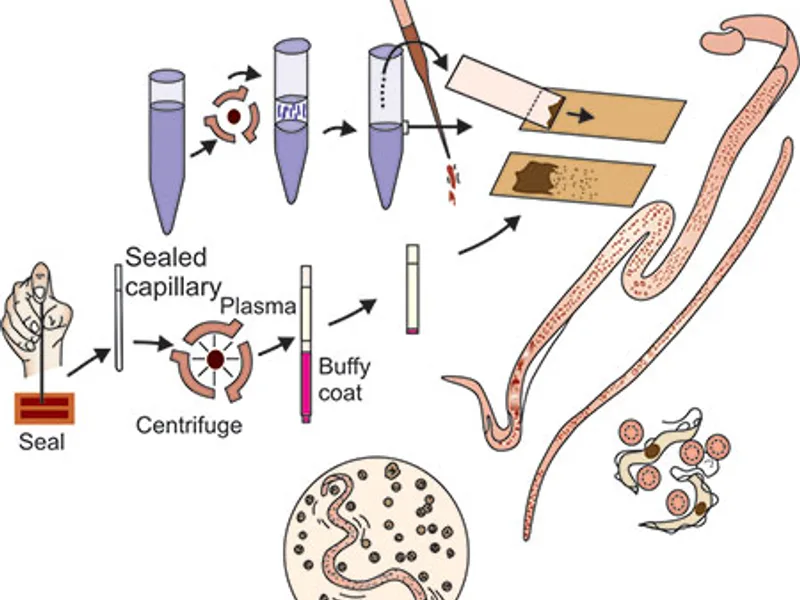

The blood film test is a relatively straightforward procedure; however, it demands skilled hands and keen observation. Here is a breakdown of the typical process involved:

A small drop of blood is obtained from a patient, usually via a finger prick using a sterile lancet. In some cases, particularly with infants or when venous blood is preferred, a sample may be drawn from a vein.

The collected blood droplet is carefully spread onto a clean glass microscope slide. Typically, two types of preparations are created:

Thin Film: The blood is spread into a single layer of red blood cells, allowing detailed examination of parasite morphology within individual cells. This makes it well suited to species identification.

Thick Film: A larger drop of blood is spread over a small area and allowed to dry without fixation, so the red blood cells lyse during staining. This concentrates the parasites, making them easier to spot, especially when parasitemia is low.

Creating both thin and thick preparations significantly enhances the detection yield of the test.

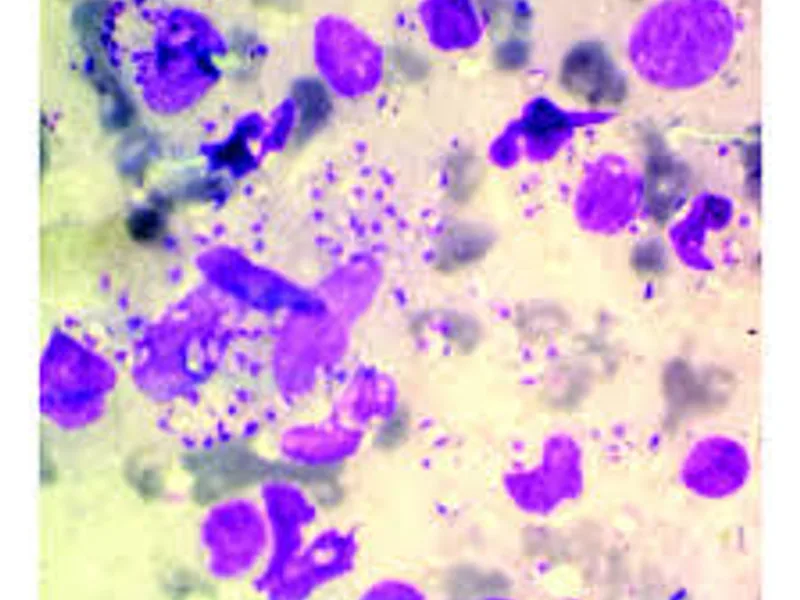

Once prepared, the slides undergo staining, most commonly with Giemsa stain or Wright's stain. These stains are critical because they color the various components of the blood cells and the Plasmodium species, rendering them visible under the microscope.

The staining process highlights the characteristic features of the organisms, such as their size, shape, color, and the presence of a nucleus and cytoplasm.

Microscopic examination is the most critical phase. A trained microscopist examines the stained slides under a high-power light microscope, systematically scanning for Plasmodium parasites inside or outside the red blood cells. Numerous fields of view are examined to ensure thoroughness.

The microscopist looks for specific indicators: the stage of the pathogen (ring, trophozoite, schizont, gametocyte), its size relative to the red blood cell, the presence of stippling (dots) within the red blood cell, and the overall appearance of the infected cell. Differentiating between the various Plasmodium species based on these subtle morphological differences requires extensive training and experience.
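The systematic, field-by-field scan described for this step can be pictured as a running tally. The Python below is a hypothetical sketch: each field is represented as a (parasites, WBCs) pair, counting stops once a target number of WBCs has been reached, and the count is extended (here from 200 to 500 WBCs) when few parasites have been seen. These thresholds are illustrative assumptions, published protocols differ on the exact cut-offs, and the function name is not from any real library.

```python
from typing import Iterable, Tuple


def count_until_wbc_target(fields: Iterable[Tuple[int, int]],
                           wbc_target: int = 200,
                           extended_target: int = 500,
                           min_parasites: int = 100) -> Tuple[int, int, int]:
    """Tally (parasites, WBCs) field by field; return (parasites, wbcs, fields_read).

    Thresholds are illustrative defaults, not a specific published protocol.
    """
    parasites = wbcs = fields_read = 0
    target = wbc_target
    for field_parasites, field_wbcs in fields:
        parasites += field_parasites
        wbcs += field_wbcs
        fields_read += 1
        if wbcs < target:
            continue  # target not reached yet: keep scanning further fields
        if target == wbc_target and parasites < min_parasites:
            # Few parasites so far: extend the count to a larger WBC target.
            target = extended_target
            if wbcs < target:
                continue
        break  # target reached: stop scanning
    return parasites, wbcs, fields_read


# Each tuple is one hypothetical microscope field: (parasites seen, WBCs seen).
sparse = [(5, 50)] * 20
print(count_until_wbc_target(sparse))  # (50, 500, 10)
```

Extending the count when parasites are scarce reduces the imprecision that comes from scaling up a very small tally.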

Despite the emergence of newer methods of detection, the blood film test continues to be a crucial instrument in the fight against malaria for several compelling reasons:

In many tropical and subtropical regions where malaria is endemic, access to sophisticated tools such as PCR, and in some settings even rapid diagnostic tests (RDTs), can be limited by cost or infrastructure. The blood film examination requires only basic laboratory equipment (microscope, stains, slides) and trained personnel, which makes it significantly more accessible and cost-effective.

This makes it an indispensable tool for widespread screening and diagnosis.

As noted earlier, the ability to identify the specific Plasmodium species is a key advantage. For instance, Plasmodium falciparum causes the most severe form of malaria, and identifying it promptly allows immediate, aggressive treatment. Conversely, Plasmodium vivax and Plasmodium ovale can cause relapsing malaria because of dormant liver stages, which calls for a different therapeutic strategy. In addition, the thick film allows parasite density to be quantified, a crucial indicator of disease severity that helps guide the intensity of therapy. This detailed information helps physicians tailor care effectively.

It is not uncommon for a person to be infected with more than one Plasmodium species at the same time. Because the blood film shows the parasites directly, it is well suited to detecting these mixed infections, which some other diagnostic methods might miss.

Recognizing a mixed infection is important because the overall parasite burden and the potential for severe disease may be higher.

Even when RDTs are used, a positive or equivocal result may warrant confirmation by blood film. Similarly, a patient with a negative RDT but a strong clinical suspicion of malaria can be further investigated with a blood film. In this way, microscopy serves as a reliable backup and confirmation tool that improves diagnostic accuracy.

The blood film examination serves as a fundamental training tool for laboratory technicians and medical professionals involved in infectious disease identification. Mastering the technique builds essential skills in microscopy and pathogen identification, which are transferable to the detection of other blood-borne pathogens and infections.

While highly valuable, the blood film test is not without its challenges:

The accuracy of the test heavily depends on the skill and experience of the microscopist. Inconsistent staining, poor slide preparation, or fatigue can lead to false-negative or false-positive results.

This human element introduces a degree of subjectivity that automated tests may avoid. Proficiency testing and continuous training are therefore paramount.

Detecting very low parasitemia (fewer than 100 parasites per microliter of blood) can be challenging, especially on a thin film. Thick films improve sensitivity, but even they may miss infections with extremely low parasite counts, potentially delaying diagnosis in some individuals.
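A rough calculation shows why very low densities are easy to miss. Under the conventional assumption of 8,000 WBCs per microliter (an assumption, not a measured value), counting 200 WBCs on a thick film corresponds to examining only about 0.025 µL of blood, so at 100 parasites per microliter the microscopist would expect to encounter just two or three parasites. The hypothetical helper below makes this estimate explicit.

```python
# Conventional default when the patient's WBC count is unknown (an assumption).
ASSUMED_WBC_PER_UL = 8000


def expected_parasites_seen(density_per_ul: float, wbcs_counted: int,
                            wbc_per_ul: float = ASSUMED_WBC_PER_UL) -> float:
    """Expected number of parasites encountered while counting a given number of WBCs."""
    # Counting N WBCs corresponds to scanning roughly N / wbc_per_ul microliters of blood.
    return density_per_ul * wbcs_counted / wbc_per_ul


for wbcs in (200, 500):
    print(wbcs, expected_parasites_seen(100, wbcs))
# 200 2.5
# 500 6.25
```

With expected counts this small, chance alone can leave the examined fields free of parasites, which is consistent with the limitation described above.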

The process of preparing, staining, and meticulously examining a blood film can be time-consuming, particularly when dealing with a high volume of samples. This can create a bottleneck in busy clinical settings where rapid test results are often desired.

The test is designed specifically to detect Plasmodium parasites. A negative malaria smear does not identify other causes of fever or of symptoms that mimic malaria, so further investigations are needed in such cases.

The landscape of malaria diagnosis is evolving. Rapid diagnostic tests (RDTs) offer speed and ease of use, while molecular methods such as PCR offer very high sensitivity and specificity, along with the ability to detect genetic mutations associated with drug resistance. Even so, blood film examination continues to hold its ground.

For many parts of the world, blood film examination remains the most practical and reliable method for detecting malaria, and its importance is greatest in regions with limited resources.

Efforts are ongoing to improve the standardization and quality of microscopy through training programs and external quality assurance schemes.

Innovative approaches, such as smartphone-based microscopy and automated slide-reading systems, are being developed to enhance the efficiency and accuracy of blood film analysis, potentially bridging the gap between traditional microscopy and advanced technologies. These advancements aim to make malaria identification even more robust and accessible globally.

The blood film test is a fundamental tool for detecting malaria. Its accessibility, cost-effectiveness, and ability to identify Plasmodium species and quantify parasitemia make it indispensable, particularly in endemic regions. Despite the emergence of newer technologies, it remains central to malaria diagnosis.

Understanding its procedure, strengths, and limitations is key for healthcare providers and public health officials working to control and eliminate malaria worldwide. This tried-and-true method continues to be a vital weapon in our arsenal against this pervasive disease.

Understand the peripheral blood smear test for malaria diagnosis, its procedure, and what results mean for patients.

April 20, 2026
